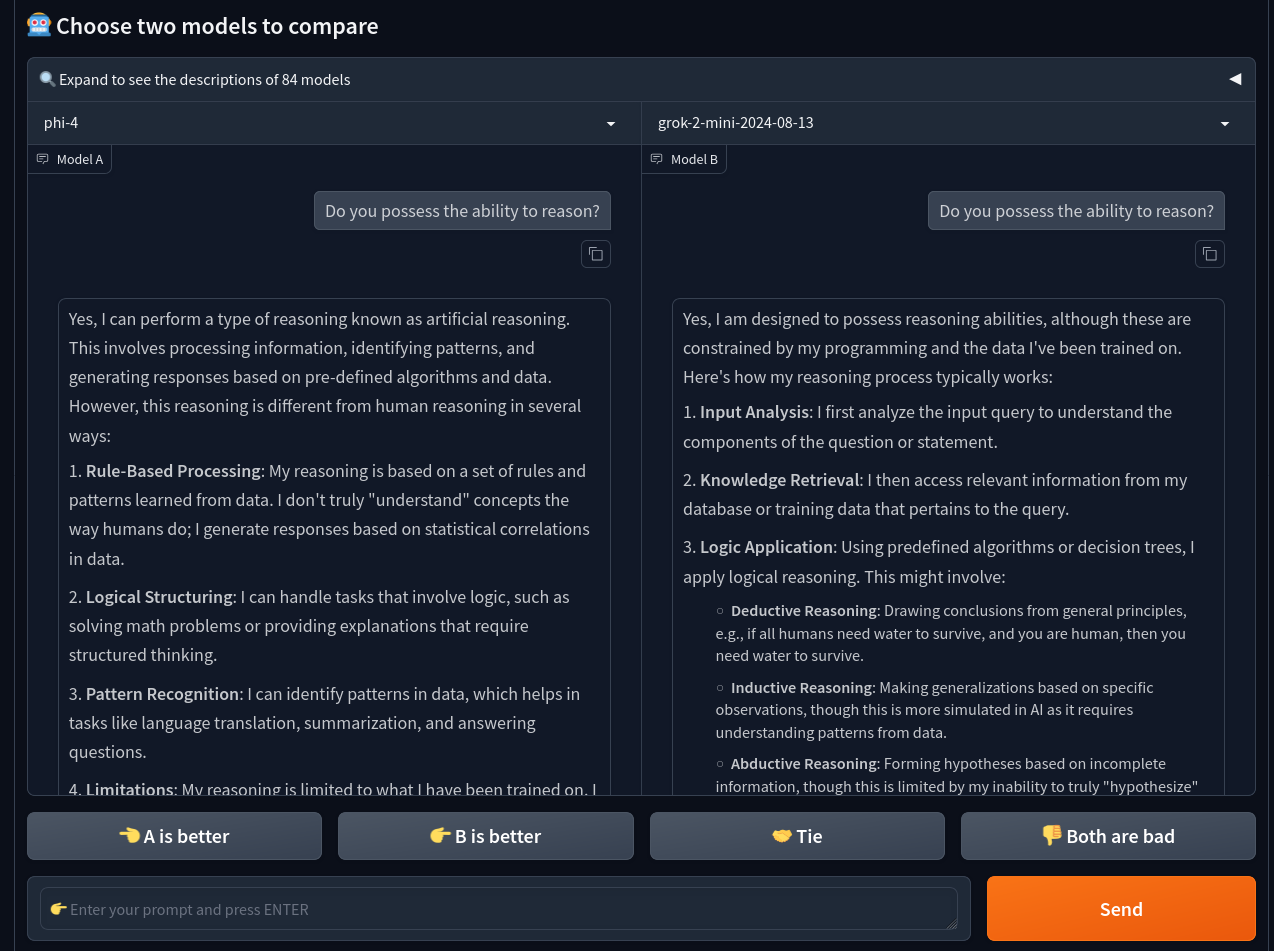

The “Arena (side-by-side)” feature of lmarena.ai's Chatbot Arena (formerly lmsys.org) lets you submit one prompt and have two different LLMs answer it simultaneously, with the outputs shown in two columns.

Could Kagi Assistant get a similar feature, with two different AI language models replying to the same question side by side? Of course without the "A is better" feedback buttons, since Kagi doesn't train on user data.

Kagi regularly adds new LLM providers, and third-party models regularly receive updates (like Claude 3.5 Sonnet in October 2024). When that happens, it's useful to compare the responses side by side to see for yourself which model better fits your specific use case.

A while back, while configuring Custom Assistants with Response Instructions, I noticed that Claude had more trouble using ResearchAgent correctly than an OpenAI model did. Differences like this would have been easier to spot if Kagi had a side-by-side feature. External tools are hard to use for this, since Kagi doesn't expose an official API for the Assistant. And when you call the third-party LLM directly, you can't test Kagi-specific features such as ResearchAgent while choosing between models.