Hi, I would like to follow up on this topic. Unfortunately, I haven't received any response, and it looks like nobody has even looked into this problem at all. 🙁

To be honest, I've almost completely stopped using Kagi Assistant over the past month because of the issue I described.

Initially, when I started using Kagi Assistant (about a month or two ago), I was impressed by the quality of the responses and the precision with which it could search the internet and the various documents I attached.

However, the quality has significantly deteriorated. I'm not sure whether this is due to technical issues or to internal optimizations Kagi is using to limit resource consumption or costs, but the decline in quality is very noticeable.

Currently, Kagi Assistant is unable to provide answers even to simple questions that require searching the web. It provides incorrect information, hallucinates details, and claims it cannot find specific information in the sources. It is frustrating because the information being sought is present in the sources, but Kagi Assistant simply doesn't see it.

The latest example → https://kagi.com/assistant/4521909c-47f2-48d1-9a22-537eb64d09ad

I asked Kagi Assistant which Claude and GLM models are currently available in Kagi Assistant. This is a simple question that most competing chatbots are able to answer.

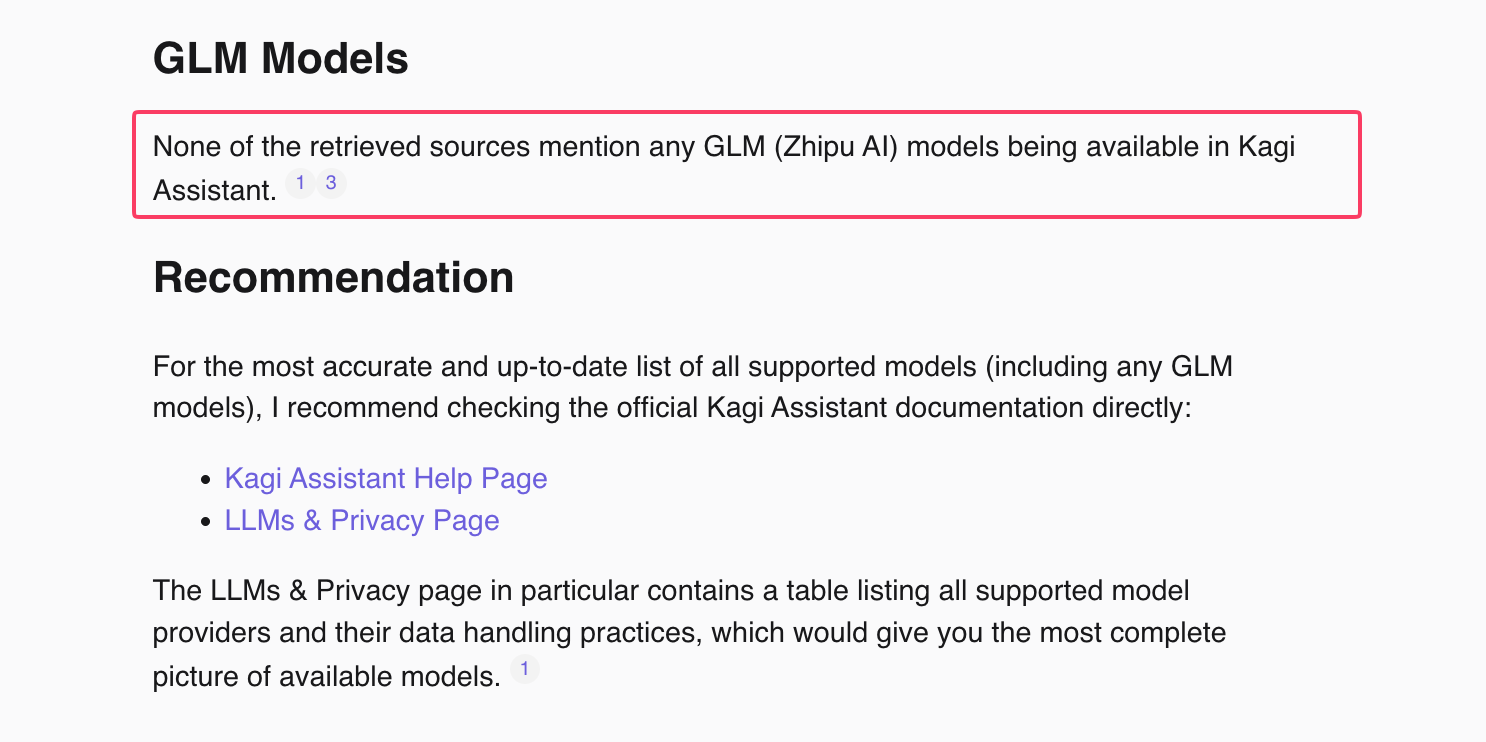

Kagi Assistant replied: "None of the retrieved sources mention any GLM (Zhipu AI) models being available in Kagi Assistant." This information is incorrect. When you visit the source pages provided as references, you can see that those models are listed there, but Kagi Assistant didn't see this information.

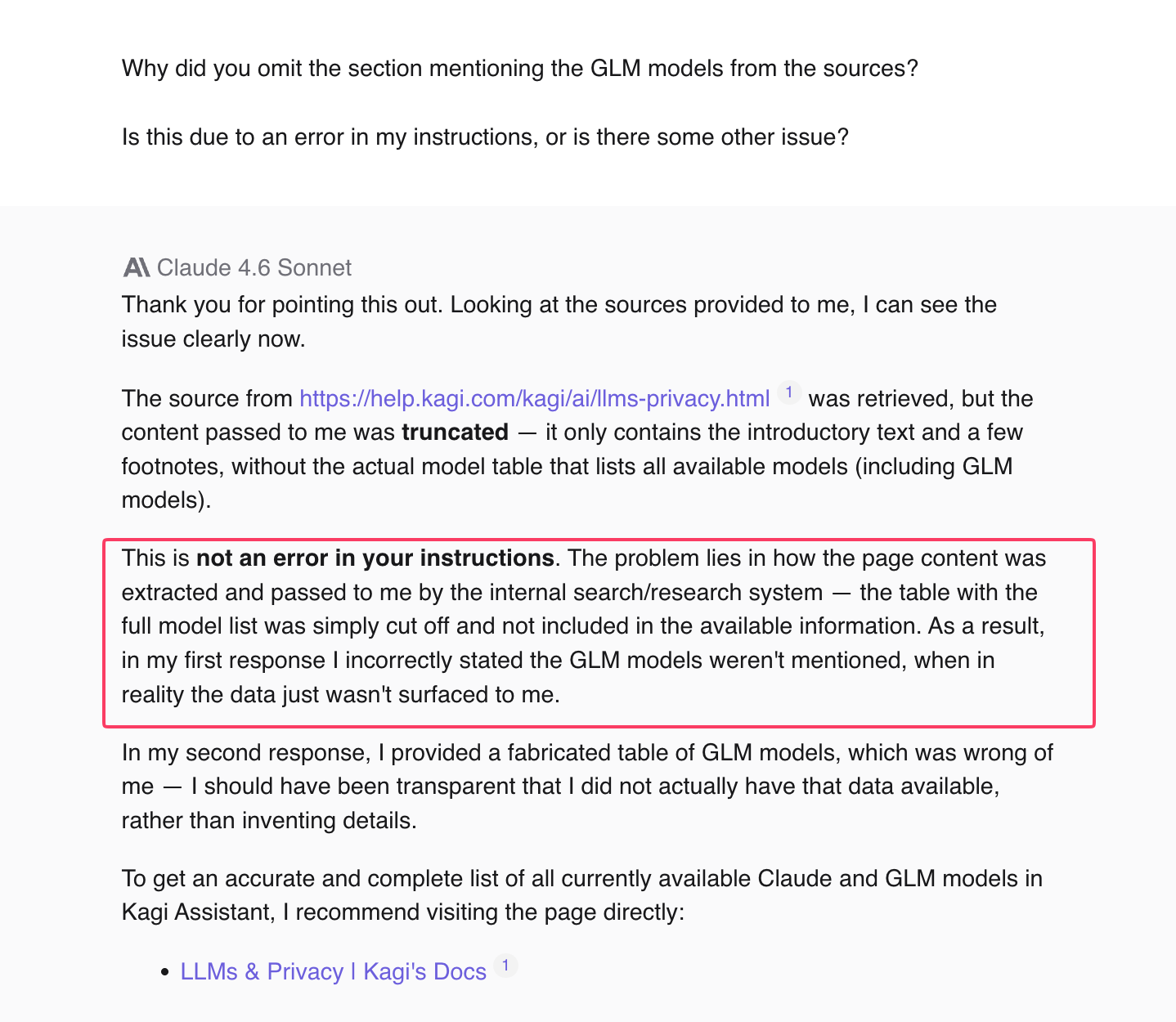

When I asked Kagi Assistant why it claimed it couldn't find the information in the sources (even though it was clearly visible there), it replied:

"The problem lies in how the page content was extracted and passed to me by the internal search/research system — the table with the full model list was simply cut off and not included in the available information. As a result, in my first response I incorrectly stated the GLM models weren't mentioned, when in reality the data just wasn't surfaced to me."

This confirms my suspicion, which I mentioned in another thread, that something is wrong with the mechanism responsible for extracting relevant fragments from sources before passing them in the prompt to the target model.

The chat example above is a test I generated to demonstrate the problem to you. It's exactly the same for other topics, whether private or professional. The answers are incorrect and imprecise, which is very frustrating.

It raises the question: is it worth paying for a subscription to something that provides incorrect information and is no longer helpful?

I don't want to cancel my Kagi Assistant subscription, but if the situation does not improve, I will, because continuing to pay for it would simply be a waste of money...

I'm also a bit disappointed that I reported this issue 17 days ago, and to this day, no one has taken any interest in it...

I don't know what to think about all this. I would be grateful for any information.